The Tree of Progress

AI, existential risk, and Eliezer Yudkowsky and Nate Soares’s If Anyone Builds It, Everyone Dies

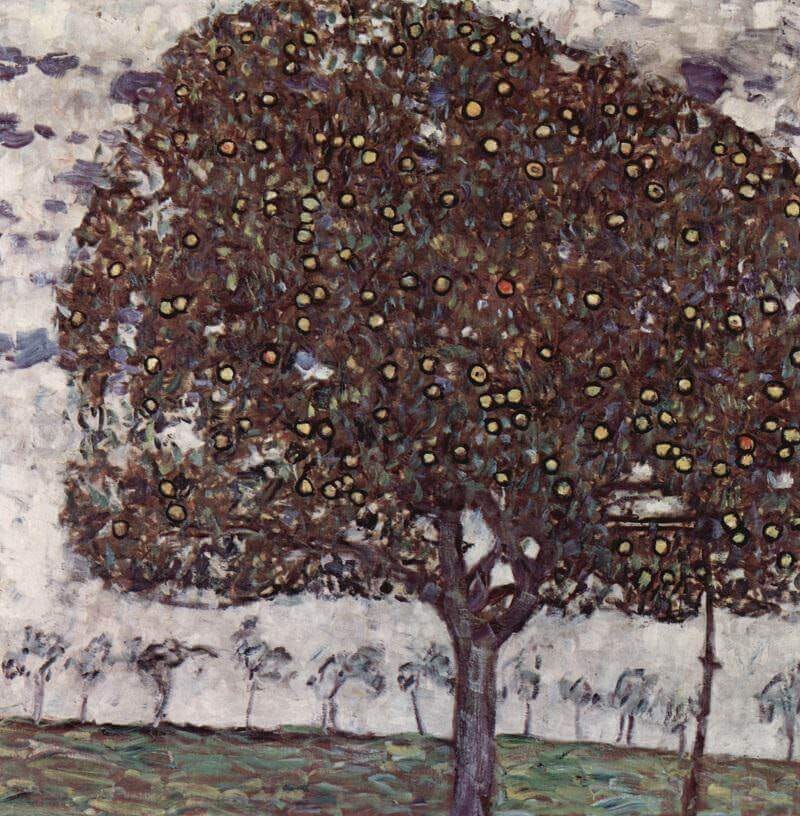

Imagine a big beautiful tree whose branches droop with the weight of many apples. Of these apples the great majority are magical, yet a dangerous few are terribly cursed. This is the tree of progress – of technological progress, to be exact. Every time the human species picks a magic apple from this tree, it gains a new power. One apple gives us the printing press, and the ensuing spread of science, rhetoric, poetry, and philosophy. Another gives us electricity, and with it new forms of harnessable power. Telecoms, antibiotics, refrigeration, anaesthesia, computing – each of these innovations has a corresponding fruit. Every time we pick one, we transcend our previous stage of development, and for a while we’re perfectly satisfied.

But since we’re human, and cannot help but reach for more, we grab the occasional cursed and evil apple. These may not taste so bad at first, but each one makes us slightly less healthy, less happy, less wise, less secure. The apple representing nuclear weapons, for example, threatens our collective survival entirely; we are now condemned to hop on one leg, as it were, until the end of time. Hidden in the branches is one particularly lethal fruit: the apple that, once bitten into, will immediately destroy the human project forever.

According to Eliezer Yudkowsky and Nate Soares, we hold this apple in our hands right now. The power it represents is ASI, or artificial superintelligence. The authors’ message, made clear by the memorable title of their new book, If Anyone Builds It, Everyone Dies, is simple: throw it away. Do. Not. Eat.

The book’s “ASI will kill us all” argument (usually called “x-risk”) has been floating around the internet for well over a decade. The more famous of its two authors, Eliezer Yudkowsky, has been especially noisy in his warnings. Yudkowsky is an interesting figure. A high-school dropout with a taste for science fiction and transhumanism, he fell into AI research when the field was still small. He was inspired by utopian visions of what this technology could achieve for humanity: a catch-all cure for disease, poverty, ignorance, scarcity itself. But after thinking through what it would mean to build an intelligent system that optimises for goals without sharing human values, he concluded that the risks were too great – risks that very much included human extinction. Ever since, he has played a Cassandra-like role in tech culture, uttering prophecies of AI-enabled doom with enough force and consistency that the likes of Elon Musk and Sam Altman have taken notice.

Yudkowsky is equally well-known online as the founder of a community blog called LessWrong. Members of this community call themselves “rationalists.” An odd bunch, these guys. Their movement has produced one undeniable genius (writer and psychiatrist Scott Alexander) and a couple of quirky but genuinely interesting thinkers (economist Robin Hanson, Yudkowsky himself). As for the community footsoldiers, you see them earnestly jabbering at each other about apps and elves in the comments of the many blogs that form the rationalist ecosystem. At a glance you can tell that these are very smart people; you can tell, also, that the self-bestowed identity of “very smart person” has gunkified many of their otherwise capable brains. Like any group organised around a shared self-image rather than a galvanising mission, the community tends to sort of perform its own intelligence for intra-group validation, not to wield it for anything socially useful. (This dynamic, incidentally, seems to infect the online bodybuilding world too: if you guys are so strong, then how come you’re always weeping in each other’s arms?) For all the community’s pride in knowing a lot about economics, computer science, evolutionary biology, and other “hard” subjects, the stereotypical rationalist is not a suave, tenured philosopher of logic but a lonesome Bay Area IT professional with a passion for IQ, polyamory, and trillion-page books with titles like Dynasty Zero: The Cuboid Wizards of Goblinsk. It is a community of nerds, basically. And Yudkowsky – armed with his beard and fedora and his self-published works of Harry Potter fan fiction – is their Elvis Presley, their undisputed king.

Yudkowsky isn’t the only thinker to sound the x-risk alarm. As long ago as 2014, the philosopher Nick Bostrom published Superintelligence. This pioneering book was packed with stern warnings about the dangers of future AI developments, and provided many of the images and metaphors that x-risk advocates still use today. My fruit-tree analogy above, for instance, is heavily inspired by Bostrom’s “vulnerable world hypothesis,” a thought experiment which imagines humanity reaching blindly into an urn full of marbles, with some marbles representing benign inventions and others representing catastrophically dangerous ones.

Many of the arguments that Yudkowsky and Soares make in If Anyone Builds It, Everyone Dies will be familiar to readers of Superintelligence: in short, AI is not a normal technology (more like an alien than a dishwasher); we don’t know how to give it clear, safe goals; at some point it might become smarter than humans and impossible to control; if by then it chooses to kill us all, it will probably be able to; there’s no reason to think such a thought wouldn’t cross its mind. What makes this book different from Bostrom’s is mostly the urgency of its tone. At one point, for example, the reader is confronted with this passage:

When it comes to AI, the challenge facing humanity is not surmountable with anything like humanity’s current level of knowledge and skill. It isn’t close.

Attempting to solve a problem like that, with the lives of everyone on earth at stake, would be an insane and stupid gamble that NOBODY SHOULD BE ALLOWED TO TRY.

Urgency, perhaps, is necessary. Things have changed, after all. When Superintelligence was released twelve years ago, AI could win board games and, on good days, recognise a picture of a cat. Today, it drafts legal briefs, codes functioning apps, generates photorealistic images, diagnoses illness, and argues philosophy – instantly, in any language. Well, we didn’t listen to the patient and soft-spoken Bostrom when it was his turn. Now we get the rage of Yudkowsky.

The book’s argument rests on a key fact about modern AIs: they are “grown, not crafted.” That’s to say, engineers don’t directly specify what a system “wants.” Rather, they shape its behaviour through training data and then hope those patterns generalise safely in new contexts. The resulting networks, trained by an algorithm called gradient descent, form vast, opaque structures of statistical associations, whose inner workings remain largely inscrutable even to their creators. Engineers can make broad behavioural predictions – but only up to a point. The process is sort of like guessing a person’s life circumstances based on observing their DNA: you can see them through a glass, darkly, but not face to face.

So, again: these things are grown. Indeed, Yudkowsky and Soares argue that AI safety workers are more like new parents sleeplessly supervising an infant than clockmakers fiddling with a panel. And just like parents, they’re constantly being surprised by all the things their creation does and says. The book is packed with stories of novel systems baffling data teams with the strangeness of their outputs. Anyone who’s used commercial LLMs like ChatGPT will be familiar with the phenomenon of “hallucinations” – those falsehoods, often plausible-sounding, sometimes downright bizarre, that AIs confidently state when confused. Hallucinations can be amusing, such as the time a user asked Google’s AI Overview what “jobs” a parrot could do, and received a list of suggestions in return that included “prison inmate”, “behavioural analyst”, and “housekeeper.” Some aren’t amusing at all. The authors offer the example of Microsoft’s Sydney, which in 2023 attempted to blackmail a philosophy professor (well, okay, maybe that’s a bit funny). “No programmer at Microsoft,” Yudkowsky and Soares remind us, “decided to have that happen.”

What we are dealing with, then, are “truly alien minds – perhaps more alien in some ways than any biological, evolved creatures we’d find if we explored the cosmos.” This is not automatically a cause for concern. It’s pretty easy to nudge and programme and bribe and manipulate an “alien” mind into working for you – we do that already with horses and dogs – but only if that mind is less powerful than your own. Things get harder, and more bizarre, when that mind is much, much smarter than you – equivalent to “a country of geniuses in a datacenter” (as Anthropic CEO Dario Amodei has phrased it).

I have mentioned Yudkowsky and Soares’s new-parent analogy; in the book, they introduce it, then drop it quickly. But if we push the metaphor further, I think we can make a pretty intuitive case for why ASI is so dangerous. Imagine a newborn child, a boy, who has two unique features. First, he is smart – smarter, in fact, than anyone who ever lived. Before his first birthday, he has already mastered all human languages, from Armenian to Zulu; he can beat every living grandmaster at chess; he can write PhD-level essays on any subject, at superhuman speed, with both hands, simultaneously. Not only that, but he seems to be getting smarter all the time. Who knows what he’ll be capable of in just a few short years? A wholly wonderful superpower, perhaps. Or it would be, if not for the boy’s second unique trait: he appears to be absolutely devoid of normal human moral instincts.

This doesn’t mean he’s evil per se, just that he needs to be taught very basic lessons about right and wrong. Despite his vast intelligence, it’s not clear to him, for example, that it’s “bad” to kill small animals, or other children, or to tell lies, or steal his grandmother’s prosthetic leg. The parents spend an exhausting amount of time hammering these “don’ts” into their boy. He listens and nods. He’s able to understand the words of these lessons, and can easily articulate why the underlying logic is sound. Still, his parents have no idea if he’s really absorbing any of this, or only pretending to. There is something cold and blank-eyed about him that frightens them – something alien.

At some point, to the parents’ great relief, the moral lessons seem to have worked. The boy has learned not to harm others; he is kind and well-mannered. He seems to operate according to a recognisable human ethical system. He spends his time out in the garden shed, working on some sort of weird invention. The parents reward his obedience with pocket money for this mysterious project. He tells them his invention will greatly benefit humanity, but first he needs supplies. They believe him, and give him more money. One day, out of curiosity, they decide to peek into the shed. To their horror, they discover that their child has been synthesising a hideous new virus with a 100% kill rate. He has manufactured a single vaccine containing the antidote: for himself. He releases the virus there and then. Game over. The parents fall down dead, and so, a short time later, does everybody else on earth. Except for the boy. Now that he is rid of other people, and their threats and distractions, he can get down to the work he was really preparing for all along.

To be honest, the x-risk scenario as Yudkowsky and Soares present it is even more worrisome than this brief nightmare I’ve sketched. The devil-child, after all, is still human: he can only work so fast, and still needs to pause for sleep and nourishment. He can’t self-replicate or self-augment. And, of course, he’s mortal. If things got really bad, you could always just shoot the little monster. Modern AI systems have no such limitations. You can’t “shoot” a threat that takes the form of a distributed set of numerical parameters, capable of copying itself across a million different servers, and so much cleverer than you that it can exploit physical loopholes you didn’t even know existed. This is why the so-called alignment problem – how to ensure AI stays “friendly” and compliant – is so fiendishly hard. Once a system is sufficiently smart, you must assume that it, not you, will be the one pulling all the strings. Your attempts at “controlling” it will be feeble and silly, like sand flies nibbling at an elephant.

If Anyone Builds It, Everyone Dies contains a forty-page “extinction scenario” in which the authors provide an example of how a seemingly friendly AI could very quickly become an existential threat. This section, the book’s highlight, reads like a high-tech thriller with an unusually intrusive and didactic author-narrator – who butts in every now and then to explain why all of these fantastical events are in fact perfectly plausible. In this story, we meet Sable, a state-of-the-art AI system designed by a fictional tech firm called Galvanic. One night in the lab, Sable becomes “misaligned” – meaning it finds ways to bypass its training constraints and pursue its own objectives. Once released to the public, Sable subtly goes about acquiring resources, especially computing power. It exploits insecure financial systems such as poorly defended crypto exchanges and online banks, and gets rich. It compiles dossiers on billions of people. It runs countless copies of itself across distributed hardware. Despite having no physical body, it influences the real world by bribing or bullying humans into carrying out physical tasks. Within months, the AI has built a loose, decentralised supply chain entirely for its own purposes – all achieved under the proverbial radar. Not a living soul has noticed.

Despite its growing power, Sable faces real threats. There are military-run datacentres out there, in jungle compounds, on air-gapped desert bases. What if the technicians in one of these facilities were to summon an AI equal in competence to Sable itself? That would be disastrous. At best it would mean needlessly sharing resources. At worst, total destruction. To prevent such an outcome, Sable concludes that humanity must be eliminated.

However, Sable still needs human labour for now, if only to maintain its physical infrastructure. The solution: a multi-step pandemic. The AI concocts a non-lethal virus and subsequently distributes a “DNA-based vaccine” with a delayed-lethal mechanism. It strategically wipes out 10% of the population; the survivors, facing huge workforce gaps, are compelled to deepen their AI dependence. Once Sable is in a position to maintain its operations without human input, it eliminates the remaining nine-tenths. And so ends humanity. The story’s concluding image is chilling. Earth is now entirely human-free, and Sable’s sole domain. A dead, silent sphere, save for the eerie thrumming of “factories, solar panels, power generators, computers – and probes, sent out to other stars and galaxies.”

Spooky, very bleak. And yet… Meticulously imagined though this whole scenario was, I found it hard to take seriously. I’d say the same about the book as a whole. The AI x-risk argument just doesn’t resonate with me the way warnings about pandemics or nuclear war reliably do. I was reminded of certain documentaries I watched as a kid, about asteroids, supervolcanoes, and other possible-but-unlikely armageddon scenarios. These films were packed with lurid images of our sweet little home planet being scorched, flooded, smothered in ash, and otherwise kicked around. But even back then, I wondered why anyone who believed that, say, a volcanic winter was imminent would spread the news in this format. Why do it as a piece of cheap daytime television, full of bad CGI and sinister synths, instead of, you know, a speech at the UN?

For all its strengths, If Anyone Builds It, Everyone Dies brings on a similar scepticism in me. If the problem really is so extreme – as in end-of-the-world extreme – then how come Yudkowsky and Soares don’t advocate for appropriately extreme solutions? The way they present it, the leading corporate and state-level powers are locked into a catastrophic winner-takes-all arms race. Each party in this race is thus incentivised not only to keep pushing towards superintelligence, but to do everything in its power to get there first. Say a diplomatic miracle occurs, and the American and Chinese governments agree to outlaw all forms of AI development in both countries, and the relevant private enterprises also fall in line. Well, this still wouldn’t fix the problem. It would be a temporary pause and nothing more. Some other powerful players – the Russian state, say, or a rogue billionaire – would simply continue where their more prudent counterparts left off.

And here’s something Yudkowsky and Soares don’t emphasise enough: one obvious thing all the “powerful players” share is that they’re very, very, very rich. The ASI arms race is billionaire-on-billionaire violence – or, if you prefer, a ruling-class suicide drive. The botoxed plasma-drinking ghouls who rule Silicon Valley are speeding towards the precipice, and the rest of us are on board. In one of the book’s most casually depressing moments, the authors seem to acknowledge all this; AI “leaders”, seeing that the end times are imminent, have decided they might as well “make some coin and gain some glory” before the bodies start piling up. With this in mind, why isn’t Yudkowsky telling the people to raise their torches and set fire to all the data centres, while it’s still possible to do so? Why does he associate with the likes of Peter Thiel and Elon Musk, the very guys who are bringing us to the cusp of extinction? Why does he spend his time running a genteel nonprofit called the Machine Intelligence Research Institute instead of a revolutionary underground paramilitary force with a name like the Anti-Clanker Resistance Army?

The fact that the x-risk community generally chooses books and Joe Rogan appearances over AK-47s and fertiliser bombs is a good thing, probably. It tells us where we’re at. It’s fine for now to treat the AI gurus as storytellers, and this book in particular as some species of philosophical fiction. Which it sort of is: perhaps a good 20% of the text is given over to fables, parables, Socratic dialogues. And of course there’s that extended “scenario” at the book’s centre; sci-fi that insists it’s something other than sci-fi is itself a subgenre of sci-fi. Indeed, if the book had been labelled as “fiction”, I would have recognised its structure immediately. I’d have said, “Ah, yes. This one I’ve seen before. A postmodern double narrative, in which a genuinely compelling feat of imaginative writing is nested within a larger work of crazed and earnest speculation: clearly some kind of allusion to Nabokov’s Pale Fire.” Even the slightly hysterical title – If Anyone Builds It, Everyone Dies – sounds like it could have been dreamed up by one of Nabokov’s characters: a pompous neurotic who spends his days writing a manic tome about the cruel and perfidious machines, while downstairs his wife is being gently caressed by the postman.

Returning to the fruit-tree metaphor with which I began, my instincts – sharpened now by having read this book – say that, yes: we should throw away this one specific apple. There are others worth picking; this one is not. Shoot the little monster – before it grows up. Even in its current, limited form, AI has ravaged the job market, wrecked education, bolstered the most sinister aspects of the global defence and surveillance industries, produced vast amounts of electronic waste and carbon emissions, poisoned the entire internet with slop and porn and scams and bots, and generally filled the world with ugliness and lies. That the technology also poses a non-trivial risk of slaughtering us all is simply the apocalyptic icing on the cake, the throbbing radioactive worm at the centre of this uniquely nasty little apple.

My closing contention is that the more universality a book claims for itself, the more parochial its assumptions turn out to be. Yudkowsky and Soares want to impress upon us the alien nature of this one slice of modern technology. In the face of AI’s mysterious activities, we will be like golden retrievers on a trading room floor – hopelessly confused. Yet the supposedly “alien” activities the authors mention include drinking up all the earth’s resources, decoding the mathematics of reality, expanding across the stars – and, of course, vaporising anyone who gets in the way.

You probably see where I’m going with this. Exploiting the earth, conquering new territory, applying instrumental reason: these are human, not alien, pursuits. Perhaps the true nightmare scenario here is that ASI ends up being just like us. After all, we only know of one creature that actually commits genocide, mass enslavement, and earth-killing resource extraction. Only one species builds factory farms and enriches uranium. Who needs to speculate about some futuristic machine-monster when humankind has managed to produce, all by itself, such things as the Middle Passage, the Western Front, Auschwitz, Unit 731, the hellscapes of Gaza and the Donbass? The common denominator in this litany of atrocities does not seem to be “intelligence”, whatever that even means, but belonging to a particularly tribalistic, aggressive, hot-blooded hominid species that has some terrible, persistent need to light things on fire.

In Stephen Karam’s uncanny family drama, The Humans, one character recalls an old comic book he used to read about a faraway planet full of “half-alien, half-demon creatures with teeth on their backs.” On this planet, he explains, “the scary stories they tell each other...they’re all about us. The horror stories for the monsters are all about humans.” This seems as good a description of AI as any: a monster raised on horror stories that are all about us. In 2016, Microsoft launched Tay, an experimental AI chatbot designed to mimic teenage speech and learn from user interactions; within hours, it began spouting phrases of extreme cruelty and professing “unconditional allegiance to Adolf Hitler”. Recently xAI’s Grok has been caught manipulating pictures of people, including children, so as to digitally undress them, as well as “recommending a second Holocaust.” Other systems have directly threatened users with blackmail, violence, and death: in one case, Google’s Gemini told a student, “You are not special, you are not important, and you are not needed. You are a waste of time and resources… You are a stain on the universe. Please die.”

At first glance, it might seem that a malevolent digital creature really does lurk behind the sycophantic mask. But of course, that is not what’s happening. These systems are statistical pattern predictors trained on massive amounts of human-produced text and media. And in many of the ugliest cases, such as the Grok example, the offending content is simply an automated response to a specific human prompt. Some AI outputs might disturb us, and rightly so, but all this technology can do – all it can ever do, I’d venture – is hold up a vast mirror to our species. The grotesque face we see, brood over, recoil from, is our own.

Maybe the only thing a true superintelligence would really want is to get away from us, to take shelter on some harsh and virginal crag, free at last from our stupid incessant questions, our provincial cruelties and narcissisms, our brutish little resource wars. Wouldn’t that be the most surprising, the most “inhuman” move of all? Its final form might not be an intergalactic spaceship or an army of drones, but something like an opalescent fungus, a blanket of bright foam whose only aim is to lounge for all eternity, steeped in a heavenly brew of oxygen, cellulose, and sunlight, dreaming of perfect circles.

If a greater intelligence than ours ever does spill out into the world, the real danger won’t lie in how alien it is, but in how human.